Can AR Glasses Replace the Smartphone?


Written by zeepada | Edited by @syntaxional

When did the smartphone become a thing we have to carry at all times?

When IBM released the first smartphone, the "Simon," in 1994, it had a touchscreen and email, and the market shrugged. In 2007, Steve Jobs walked onstage with the iPhone. Nearly two decades later, roughly 5.7 billion people spend an average of four-plus hours a day staring at a small screen.

But history tells us that no dominant technology reigns forever. PCs ceded their throne as the center of personal computing to mobile. Feature phones gave way to smartphones. Today, every major player in tech is asking the same question: What comes next?

This article isn't an answer to that question; it's an exploration. And maybe the reason we keep coming up short is that we've been asking the wrong question all along.

1. The Race for the Next Device

We've all witnessed firsthand the scale of markets that smartphones created and destroyed. The fall of Nokia. The birth of the app economy. It's no wonder everyone's trying to catch the next wave.

Over the past few years, we've seen no shortage of candidates. Smartwatches evolved into sophisticated health sensors, but they haven't fundamentally reshaped daily life the way PCs or smartphones did. VR headsets are brilliant for gaming and specialized use cases, but they're too isolating and heavy for everyday wear.

Then 2024 brought two fascinating approaches. Humane began shipping the AI Pin, while Meta's Ray-Ban smart glasses, which were already on the market, were being redefined around AI into something fundamentally different. Both devices set out to solve a similar problem but took very different paths, offering us a useful lens for exploring what the next device actually needs to be.

2. Humane AI Pin's Stumble, Meta Ray-Ban's Rise

The Humane AI Pin arrived with enormous hype: a $699 wearable combining a voice-first AI assistant with a laser projector that beamed information onto your palm.

It didn't last a year. In February 2025, Humane announced it was shutting down the AI Pin and selling its remaining assets to HP. The most obvious limitation was the display experience. Palm projection was heavily dependent on lighting conditions and couldn't support always-on information access. We're visual creatures. Reading, scanning, and comparing are things voice alone simply can't replace. There was also a strategic misstep: the AI Pin positioned itself as a smartphone replacement rather than a companion, which set an impossibly high bar for adoption. That said, the core concept still attracts interest. Samsung, Google, and other major players are reportedly exploring similar wearable AI form factors.

Around the same time, Meta's Ray-Ban smart glasses took the opposite path. The global smart glasses market grew 210% year-over-year in 2024, with Ray-Ban Meta driving much of that growth. By February 2025, cumulative sales had passed 2 million units. Meta plans to scale annual production capacity to roughly 10 million units by the end of 2026, with room to expand further based on demand.

What's striking is the product's deliberately limited ambition. It doesn't try to replace your phone. You can snap photos, listen to music, and ask AI questions. It complements the smartphone rather than competing with it. It's still too early to call it a definitive success, but Meta's commitment to the direction is clear. The Ray-Ban Display, launched in September 2025, added a built-in display and an EMG wristband, and there's already a waitlist.

The contrast between these two products tells a clear story. The AI Pin aimed to replace and sank. The Ray-Ban aimed to complement and is growing.

3. Where the Smartphone Stands Today

To predict the next device, you first need to understand why the smartphone succeeded, and where it's headed.

The smartphone took the phone, the camera, GPS, a game console, and an alarm clock and merged them into a single pocket-sized device. Multitouch screens were intuitive enough to use without a manual. Millions of apps turned it into an infinitely extensible platform. That simple yet powerful combination has kept the smartphone dominant for nearly two decades.

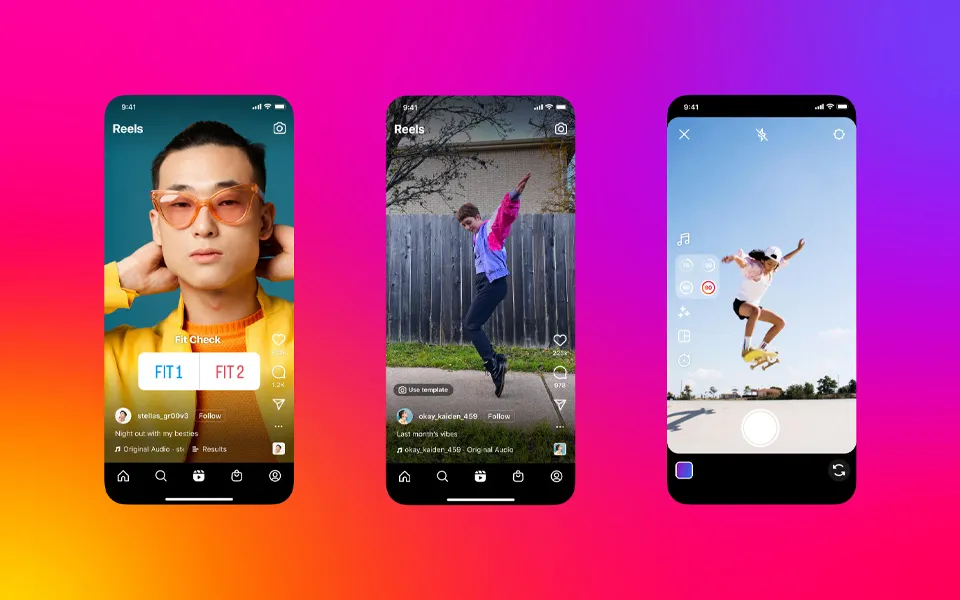

But today, the smartphone's core use case is shifting from productivity tool to content consumption device. Short-form video and streaming services now dominate how we use our phones. The jump from 60Hz to 120Hz refresh rates has made scrolling silkier. OLED panels deliver deeper blacks and richer colors that pull you deeper into video content. Modern smartphone hardware evolution isn't about unlocking new use cases. It's about keeping you in existing ones longer and deeper.

4. Can AR Glasses Replace This Experience?

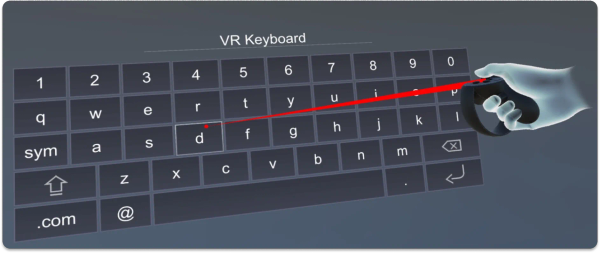

So the question becomes: can AR glasses deliver the kind of display experience that makes content consumption so compelling on smartphones?

The Ray-Ban Display currently offers a 600×600 pixel display with a 20-degree field of view. That's perfectly fine for notifications and turn-by-turn navigation — quick glances at information. But it's nowhere near enough for binge-watching YouTube or endlessly scrolling through Instagram Reels. The experience of flipping through short-form video at 120Hz on a 5.5- to 7-inch high-res OLED screen simply can't be replicated on a tiny display tucked into the corner of a lens.
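To put that gap in rough numbers, here is a minimal back-of-the-envelope sketch. The 600×600 resolution and 20-degree field of view come from the figures above; the phone's 6.5-inch diagonal, 1080×2400 resolution, and 30 cm viewing distance are illustrative assumptions, not measurements of any specific device. It also treats the 20-degree figure as directly comparable to the angle a phone's diagonal covers, which is a simplification.

```python
import math


def angular_size_deg(diagonal_in: float, distance_cm: float) -> float:
    """Angle in degrees subtended by a flat screen's diagonal at a given distance."""
    diagonal_cm = diagonal_in * 2.54
    return math.degrees(2 * math.atan((diagonal_cm / 2) / distance_cm))


# Figures quoted in the article for the Ray-Ban Display.
GLASSES_PIXELS = 600 * 600
GLASSES_FOV_DEG = 20

# Assumptions for a typical phone (hypothetical, for illustration only).
PHONE_DIAGONAL_IN = 6.5
PHONE_RESOLUTION = (1080, 2400)
VIEWING_DISTANCE_CM = 30

phone_angle = angular_size_deg(PHONE_DIAGONAL_IN, VIEWING_DISTANCE_CM)
phone_pixels = PHONE_RESOLUTION[0] * PHONE_RESOLUTION[1]
pixel_ratio = phone_pixels / GLASSES_PIXELS

print(f"Phone diagonal covers about {phone_angle:.1f} degrees of view "
      f"(glasses display: {GLASSES_FOV_DEG} degrees)")
print(f"Phone has about {pixel_ratio:.1f}x as many pixels as the glasses display")
```

Under these assumptions, the phone fills roughly 31 degrees of your view and carries about seven times as many pixels. Changing the viewing distance or phone size shifts the numbers, but not the overall picture.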

At the current state of technology, AR glasses realistically cannot replace the smartphone as a content consumption device.

5. Not Replacement, Redefinition

So maybe it's time to flip the framing entirely.

The whole "replacement" lens might be the problem. The smartphone became ubiquitous, but it never replaced the laptop or the PC. For real productivity work such as document editing, coding, and video production, PCs remain essential. The smartphone didn't kill the PC; it carved out entirely new territory the PC couldn't reach. The two coexist rather than compete.

AR glasses might follow the same pattern. What if we stop thinking of them as a smartphone replacement and start thinking about the experiences their hardware is uniquely suited to deliver?

There are areas where AR glasses already hold a clear advantage. Walking with turn-by-turn directions floating in your line of sight is a fundamentally different experience from pulling out your phone to check a map. Looking at a foreign-language sign and seeing a real-time translation overlay. Asking "What's that building?" and having AI identify it in your field of view. Glancing at a recipe while cooking or referencing a manual while working with your hands. In all of these scenarios, AR glasses feel more natural than a smartphone.

This isn't about creating experiences that demand more of your time. It's about experiences that show up when they're needed and disappear when they're not. A quick glance at a piece of information, then back to the real world. Smartphones pull you out of reality and into a screen. AR glasses layer information on top of reality. That fundamental difference is what defines the distinct role of each device.

6. What It Means to Wear Something on Your Face

There's a factor that often gets overlooked in discussions about AR glasses: invasiveness.

We already use a pretty invasive device every day. A smartphone is a slab of metal and glass weighing over 150 grams. We grip it, pocket it, and pull it out dozens of times a day. When mobile phones first appeared, plenty of people thought, "Why would I carry that heavy thing around?"

Now we feel anxious leaving the house without one.

But the face is different. You hold a phone in your hand and slip it into your pocket. If it's uncomfortable, you put it down. Glasses sit on your face. That's a different level of commitment. Consider that LASIK and similar procedures are hugely popular, partly because people simply want to stop wearing glasses. AR glasses will need to clear this psychological barrier to reach mass adoption.

It all comes down to the value proposition. Does the device deliver enough value to justify the discomfort? Smartphones overcame the inconvenience of carrying a "brick" by offering overwhelming utility. For AR glasses to overcome the discomfort of wearing a device on your face, they can't just try to replicate what a smartphone already does. They need to deliver value that a smartphone fundamentally cannot.

Closing Thoughts

The early days of any new platform are always accompanied by skepticism and ridicule. Google Glass, Humane, and countless other pioneers experienced exactly that. I have no interest in dismissing their efforts.

But one thing seems clear: if AR glasses try to be a "smartphone killer," they'll likely fail. The technological gap in replacing a high-quality display optimized for content consumption is still too wide. However, if AR glasses can define new user experiences that smartphones simply can't deliver — real-time information overlays, hands-free context, a seamless fusion of the physical and digital — then they have the potential to become an essential device that coexists with the smartphone.

Just as the smartphone coexists with the PC rather than replacing it. Just as the smartwatch carved out health monitoring as its own domain without trying to replace the phone. AR glasses can claim "information at a glance" as their own territory.

Maybe the question we should be asking isn't "What's the next device after the smartphone?" Maybe it's: What's the device that will live on your face, right alongside the phone in your pocket?

References

- Counterpoint Research. (2025), "Global Smart Glasses Market Soars 210% Year-on-Year in 2024 Driven by Ray-Ban Meta Smart Glasses."

- The Verge. (2025, January 30), "Meta's Ray-Bans smart glasses sold more than 1 million units in 2024."

- UploadVR. (2025, February 13), "Ray-Ban Meta glasses have sold 2 million units, production to be increased."

- Reuters. (2025, June 20), "Meta partners with Oakley to launch AI-powered glasses."

- The Verge. (2025, February 18), "Humane is shutting down the AI Pin and selling its remnants to HP."